Hi everyone,

Last week a good friend told me my newsletters have been a little scary lately. Too many layoff bits, too much "what is happening to work?" Fair point.

I am taking that to heart. I don't want to make the world feel scarier than it is! In fact, this week's essay is partly about why the replacement story is more complicated than the headlines suggest.

The thought stuck in my head this week was more practical: why have Claude Code and Opus 4.7 felt more expensive and sluggish lately, while Codex and GPT-5.5 feel faster and easier to use? A few articles and podcasts helped clarify it. Underneath the model race, a lot of this comes back to money, capacity, and subsidies going away.

Signals This Week

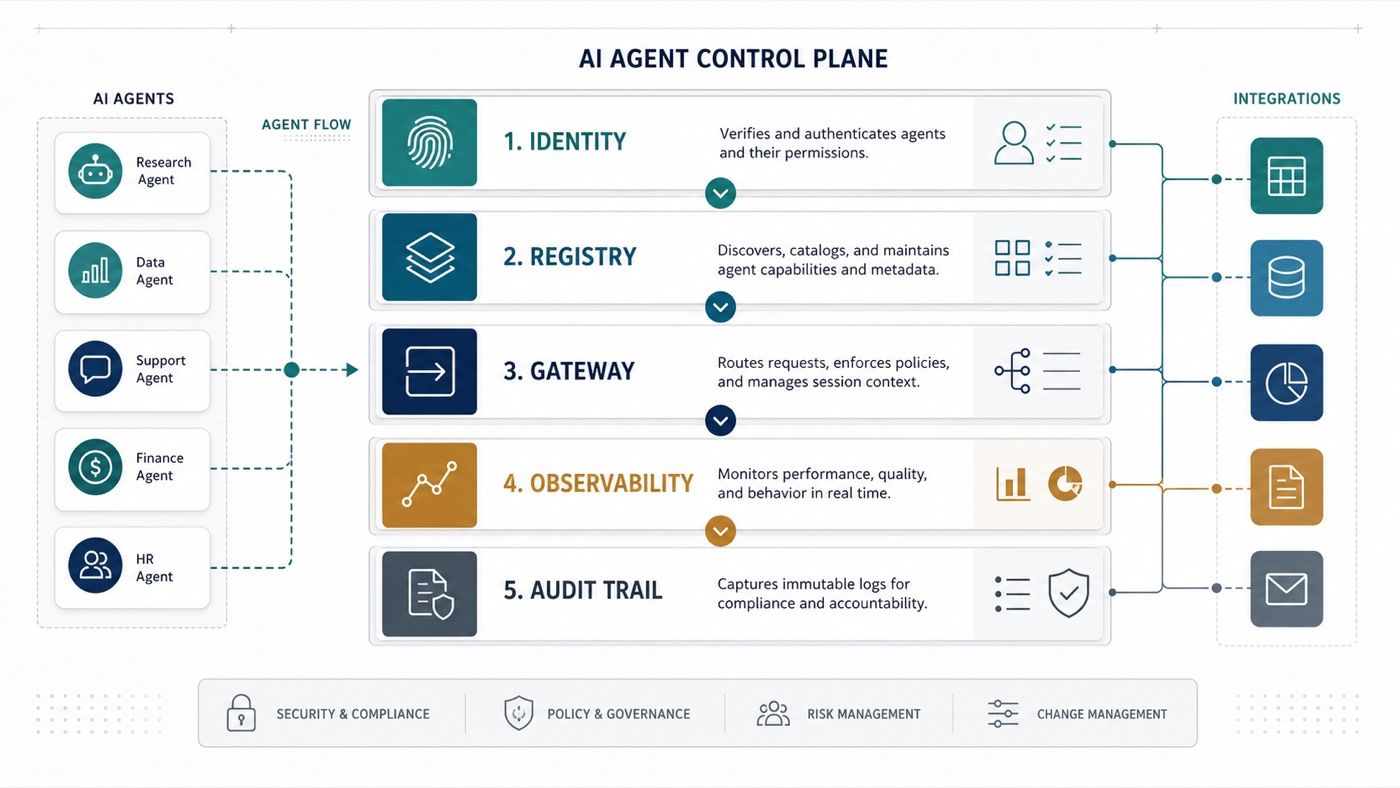

Agent control planes became a real category. Google Cloud Next and Mistral Workflows both pointed in the same direction: the market is moving from "build an agent" to "operate a fleet of agents." Registry, identity, gateways, observability, approvals, and audit trails are becoming the product.

The AI cost story got less simple. GitHub's pricing change, Anthropic throttling, OpenAI's compute buildout, and The Economist's supply chain reporting all complicate the "AI is cheaper labor" story. Unit costs may fall, but total spend can rise when every workflow starts using bigger context, more retries, and more agent loops.

The first request is often not the real problem. Several operating conversations this week had the same shape: a tool request turned out to be a problem of getting data into the right workflow; a "flip the switch" request hid architecture dependencies; a pile of tickets hid missing decision lanes. AI can make that worse if teams automate requests before they understand the decisions underneath.

🎯 Main Theme: The AI Replacement Math Got Less Clean

I understand why the replacement story is attractive. I have been talking to executives who are getting their first real exposure to analyst-style AI tools. A CFO sees Claude summarize reports and pull signal out of email. A COO sees it turn scattered operational updates into a daily digest. For the first time, the AI feels like a real coworker, not just a chatbot.

Once you see that, the spreadsheet practically writes itself. A junior analyst costs this much. A subscription costs that much. Why not replace some human work with cheaper AI work?

Some of that math will hold. This is not a story about AI failing. But the spreadsheet has a hidden assumption: AI stays cheap, available, and reliable as usage scales.

That assumption is starting to look shaky.

The simple way to say it is this: AI work is not magic labor. It is inference. Every long prompt, document upload, agent loop, retry, tool call, and generated answer consumes compute somewhere. Most business users don't see that because the product hides it behind a chat window or a monthly subscription.

That is the part of the replacement math we have been undercounting.

The frontier companies are buying scarce compute

Look at what the biggest players are doing, not what they are saying in product demos.

OpenAI wrote on April 29 that it has passed its original 10GW Stargate commitment, with more than 3GW added in the last 90 days. Amazon's April 29 Q1 earnings release says OpenAI has committed to consume about 2GW of Trainium capacity beginning in 2027, while Anthropic has secured up to 5GW. Anthropic said on April 20 that it is committing more than $100 billion over ten years to AWS technologies.

Google and Microsoft are telling the same story from different angles. Sundar Pichai wrote during Google Cloud Next that Google Cloud's first-party models now process more than 16 billion tokens per minute through direct API customer use. Microsoft's FY26 Q3 release says its AI business passed a $37 billion annual revenue run rate, while AI infrastructure and GitHub Copilot usage helped drive a 47% increase in Intelligent Cloud cost of revenue.

That matters because this is the input cost behind the AI replacement story. If AI labor is really compute labor, then replacement depends on the price, availability, and reliability of compute. A CFO should hear budget volatility. A CIO should hear capacity planning. A COO should hear operational dependency. A CMO or CRO should hear a warning that more output is not the same as better pipeline, better conversion, or better customer experience.

Scarcity is already showing up in the product

This is not just a capital markets story. It is already showing up in everyday usage.

Axios's Madison Mills wrote on April 2 that Anthropic's server capacity was not keeping pace with demand, leaving paying customers stuck with usage limits and outages. I feel that one personally. Claude is still excellent for a lot of thinking and writing work, but the last few weeks have made availability and speed part of my own tool choice. Codex is pulling more of my workflow because it is faster in places, more integrated into the work loop, and seems to be benefiting from OpenAI's capacity bet.

Tom's Guide tracked a Claude outage on April 28, while Anthropic's own status page confirmed Claude.ai availability issues and elevated API errors. One outage does not prove a structural shortage. But repeated limits and short interruptions change how operators think. A tool that replaces work has to be available when the work needs doing.

GitHub made the cost side explicit. Mario Rodriguez, GitHub's chief product officer, wrote on April 27 that Copilot's premium request model was no longer sustainable as it moved into longer agentic coding sessions. Starting June 1, GitHub says Copilot will move toward usage-based billing, where heavier model use consumes more credits. For annual Copilot Pro and Pro+ users who stay on the old request-based plan, GitHub's own table shows one Claude Opus 4.7 interaction will consume 27 premium request units instead of 15. In plain English: the heavier model burns through the allowance faster.
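To make the arithmetic concrete, here is a rough sketch. The 15 and 27 unit figures are the ones quoted above from GitHub's table. The monthly allowance of 300 units and the function name are assumptions for illustration only, so swap in whatever your plan actually includes.

```python
def interactions_per_allowance(allowance_units: int, units_per_interaction: int) -> int:
    """How many whole interactions fit in a fixed allowance of request units."""
    return allowance_units // units_per_interaction


# Assumption: a monthly allowance of 300 premium request units (illustrative).
MONTHLY_ALLOWANCE = 300

# Units per Claude Opus 4.7 interaction, as quoted from GitHub's table.
old_rate = 15
new_rate = 27

old_count = interactions_per_allowance(MONTHLY_ALLOWANCE, old_rate)
new_count = interactions_per_allowance(MONTHLY_ALLOWANCE, new_rate)

print(f"At {old_rate} units each: {old_count} interactions per month")
print(f"At {new_rate} units each: {new_count} interactions per month")
```

Under that assumed allowance, the same plan covers 20 heavy-model interactions at the old rate and 11 at the new one.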

That is a pricing signal every operations team should notice.

For a director of business applications or a program manager, this is the practical lesson: the tool you tested in a pilot may not have the same economics when 200 people use it daily, when the workflow runs longer, or when the vendor changes the billing model. A pilot proves capability. It does not automatically prove unit economics.

Efficiency helps, but it may not solve the problem

There is an important counterargument here: models and infrastructure are getting more efficient.

OpenAI is already showing both sides of the efficiency story. Its GPT-5.5 launch post says the model uses fewer tokens for the same Codex tasks, and that Codex helped improve load-balancing heuristics, increasing token generation speeds by more than 20%. Its Codex enterprise post says weekly Codex users grew from more than 3 million in early April to more than 4 million two weeks later. That is the tension in one company: better efficiency lowers the cost of a task, but better products can expand usage faster than the savings arrive.

That is exactly how cloud got big. Unit costs fell, adoption rose, and the total bill still became a CFO problem.

The energy layer makes this harder to wave away. The International Energy Agency's Energy and AI report estimates data centers used about 415 terawatt hours of electricity in 2024, roughly 1.5% of global electricity consumption. Goldman Sachs Research forecast that global data center power demand could rise as much as 165% by 2030, while Semafor reported that grid constraints and equipment shortages could delay 30% to 50% of data center projects in 2026.

None of that sounds like a problem that gets fixed overnight.

It also means "wait and prices will fall" is not a full strategy. Prices may fall for individual tasks. Total spend can still rise if every team starts running bigger workflows more often.
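A back-of-the-envelope sketch shows how both things can be true at once. Every number here is made up for illustration, not taken from any vendor's price list: token prices drop 60%, each task uses twice the context, and the team runs four times as many tasks.

```python
def cost_per_task(price_per_million_tokens: float, tokens_per_task: int) -> float:
    """Dollar cost of a single task at a given token price."""
    return price_per_million_tokens * tokens_per_task / 1_000_000


def monthly_spend(price_per_million_tokens: float, tokens_per_task: int, tasks_per_month: int) -> float:
    """Total monthly dollar spend for one workflow."""
    return cost_per_task(price_per_million_tokens, tokens_per_task) * tasks_per_month


# Hypothetical "before": pricier tokens, smaller context, modest usage.
before_task = cost_per_task(10.0, 20_000)
before_total = monthly_spend(10.0, 20_000, 5_000)

# Hypothetical "after": tokens 60% cheaper, context doubled, usage up 4x.
after_task = cost_per_task(4.0, 40_000)
after_total = monthly_spend(4.0, 40_000, 20_000)

print(f"Cost per task: ${before_task:.2f} -> ${after_task:.2f}")
print(f"Monthly spend: ${before_total:,.0f} -> ${after_total:,.0f}")
```

With these assumed inputs, the cost of a single task falls from about $0.20 to about $0.16, while the monthly bill more than triples, from about $1,000 to about $3,200.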

Replace tasks before roles

This is the part the usual "AI replaces people" line skips.

If AI is abundant and cheap, you can be sloppy. Point it at everything. Replace first, optimize later. But if AI capacity is expensive, metered, and sometimes unreliable, the replacement decision needs more care.

Edward Targett at The Stack reported on April 20 that Goldman Sachs analysts found many companies overrunning initial AI inference budgets by orders of magnitude. In one software company Goldman surveyed, inference costs were already approaching 10% of engineering headcount cost, and Goldman expected those costs to reach parity with total engineering headcount cost soon. That is not a reason to stop using AI. It is a reason to stop pretending the cost side is trivial.

Some tasks will move to AI: summaries, first drafts, basic classification, routine research, code migrations, test generation, support triage, and daily briefings where the quality bar is clear.

Other work is less obvious: judgment-heavy decisions, messy coordination, high-trust analysis, and work where the expensive part is not producing text but knowing what matters. In those cases, AI may still be valuable, but more as a multiplier for the human than a clean substitute for the role.

That is probably the healthier answer anyway. Replace tasks before roles. Measure cost per useful output, not just subscription price. Ask how much human review is still required, and what happens when the model is throttled, down, repriced, or moved behind a higher tier.

For practitioners, the work starts smaller than the strategy memo. Pick one workflow and write down the real chain: inputs, model used, context size, retries, human review time, exception path, and business result. The point is not to slow down experimentation. It is to know which experiments are actually earning their complexity.
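One way to write that chain down is a small record per workflow. This is a minimal sketch, not a standard: the field names, prices, and review times are all hypothetical, and it only covers the parts that reduce to arithmetic (model cost, review cost, and cost per useful output).

```python
from dataclasses import dataclass


@dataclass
class WorkflowRecord:
    """One workflow's real chain for a period. All fields are illustrative."""
    name: str
    model: str
    runs: int                        # attempts in the period, retries included
    useful_outputs: int              # results that passed human review
    input_tokens_per_run: int        # context size sent to the model
    output_tokens_per_run: int
    input_price_per_million: float   # dollars, hypothetical
    output_price_per_million: float  # dollars, hypothetical
    review_minutes_per_run: float
    reviewer_hourly_cost: float      # dollars

    def model_cost(self) -> float:
        per_run = (
            self.input_tokens_per_run * self.input_price_per_million
            + self.output_tokens_per_run * self.output_price_per_million
        ) / 1_000_000
        return per_run * self.runs

    def review_cost(self) -> float:
        return self.runs * self.review_minutes_per_run / 60 * self.reviewer_hourly_cost

    def cost_per_useful_output(self) -> float:
        if self.useful_outputs == 0:
            return float("inf")  # nothing usable came out of the workflow
        return (self.model_cost() + self.review_cost()) / self.useful_outputs


# Made-up example: a daily briefing workflow over one month.
briefing = WorkflowRecord(
    name="daily ops briefing",
    model="example-model",
    runs=1_000,
    useful_outputs=800,
    input_tokens_per_run=30_000,
    output_tokens_per_run=2_000,
    input_price_per_million=3.0,
    output_price_per_million=15.0,
    review_minutes_per_run=3.0,
    reviewer_hourly_cost=60.0,
)

print(f"Model cost:  ${briefing.model_cost():,.2f}")
print(f"Review cost: ${briefing.review_cost():,.2f}")
print(f"Cost per useful output: ${briefing.cost_per_useful_output():.2f}")
```

In this made-up example the model bill is about $120 and the human review bill is about $3,000, so the cost per useful output lands near $3.90. The split will differ for every workflow, but writing it down shows where the money actually goes.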

The question to ask now

The first wave of AI adoption made work look automation-ready. The capacity crunch reminds us that automation still has economics.

The practical question for leaders is not "can AI do this?" It is "can AI do this reliably, at the right cost, with the right level of human judgment still attached?"

That is why I think this becomes a two-part story. This week is the replacement math. Next week is the operating discipline: the TokenOps or AI FinOps role that starts to look a lot like CloudOps did a decade ago.

The demo may have been subsidized. The operating model won't be.

📡 The Wire

Google Cloud named the agent control plane. At Google Cloud Next '26, Google announced the operating layer around agents: development tools, registry, gateway, identity, observability, runtime, A2A protocol support, and Workspace MCP. The pattern matters more than the feature list. Enterprise agent procurement is starting to sound like infrastructure procurement: identity, audit trail, runtime, and cost visibility first. (Google, Google Cloud)

DeepSeek made the cost question harder. DeepSeek's new V4-Pro and V4-Flash models push against the "AI is getting expensive" story. TechCrunch reported that both are open-weight mixture-of-experts models with 1 million token context windows. VentureBeat framed V4-Pro as near frontier quality at much lower cost. The catch is risk: Reuters reported a US State Department warning about alleged AI intellectual property theft by DeepSeek and other Chinese firms. Model routing now means cost, quality, data exposure, vendor geography, and compliance in one decision.

The capacity crunch has a supply chain chart now. The Economist put a clean number on the bottleneck: since 2024, the five hyperscalers increased combined capital spending from $234 billion to $677 billion, up 190%, while the 50 largest hardware suppliers they depend on increased capital spending from $153 billion to $223 billion, up 45%. That gap helps explain queues, throttling, pricing changes, and availability constraints. Software moves fast. Chips, memory, power, cooling, and grid connections do not. (The Economist)

💬 Overheard

Flip side of doom and gloom: there will always be demand that can't be met.

Which is, somehow, another kind of gloom.

📚 What I'm Consuming

▶️ Claude Code Token Cheatsheet. Practical walkthrough on being more economical with tokens in Claude Code. Useful companion to this week's cost theme.

🎙️ The AI Subsidy Era is Over. NLW connects GitHub's consumption-pricing shift to the end of flat-fee AI subsidies and the rise of AI cost discipline.

🗞️ The irresistible rise of authorial AI. The FT's sharper worry is not fake prose. It is fluent writing with weaker thinking underneath.

🌙 After Hours

Double Feature: Six Days of the Condor + Three Days of the Condor

Book: Six Days of the Condor by James Grady (1974) | ★★★★★

Film: Three Days of the Condor, dir. Sydney Pollack (1975) | 117 min | ★★★★

I watched Three Days of the Condor many years ago, and it is still one of my all-time favorites. It has that perfect paranoid-thriller feel: tight, smart, and tense the whole way through. Redford is great, the conspiracy machinery is great, and the whole thing just moves.

Six Days of the Condor was very good too. Part of the fun is seeing how closely Three Days of the Condor follows the book at first, then compresses the six-day premise into a sharper three-day movie. I liked Malcolm's bookworm quality, the battle of wits, and the conspiracy angle. But the middle and ending of the book felt more like old James Bond novels to me: more action, less paranoid machinery.

The film is the better version for me. It gives Faye Dunaway's Kathy a stronger role, lands the ending better, and feels cleaner overall. Still, the book is a real thriller and absolutely worth reading if you love the movie.

🎙️ Listen

Prefer to listen? Quanta Bits is also available on Apple Podcasts and Spotify.

How this gets made

I collaborate with Spock, my AI agent. He researches extensively: scanning, filtering, and surfacing what's relevant across my business. I read, listen to, and watch what resonates, and decide what matters. I provide direction, and we draft together. The editorial judgment is mine. He'd tell you the same. Most logical.