Hi everyone,

Relatively quiet week from the major model providers. But the tension between pessimists and optimists got louder. Anthropic published real displacement data. Block cut nearly 40% of its workforce. Microsoft's AI chief gave white-collar workers 12-18 months. The friction is healthy. The debate matters. But one thing both sides agree on: the pace is outrunning our ability to absorb it, and that pressure is spreading from tech into politics, health, and the workplace.

For the record, I'm an optimist. I believe technology is fundamentally good for humanity and that AI will change things for the better. But that outcome isn't automatic. It requires intention.

This Week

Patterns from this week's reading that matter for operations leaders.

The displacement debate got data. Both sides showed up with evidence this week. The argument shifted from predictions to measurement, and the gap between the two camps is where governance lives.

Governance can't keep up with deployment. Enterprise AI adoption is outpacing the security and oversight infrastructure meant to contain it. The tools are human-oriented. The agents aren't.

Business models are inverting. Outcome-based pricing replacing per-seat. Software capturing labor spend, not IT budgets. The economics of who pays for what are being rewritten.

The bottleneck on AI adoption isn't capability. It's verification. AI can do the work. We can't yet prove the work is correct. That gap showed up everywhere this week.

The White Collar Reckoning

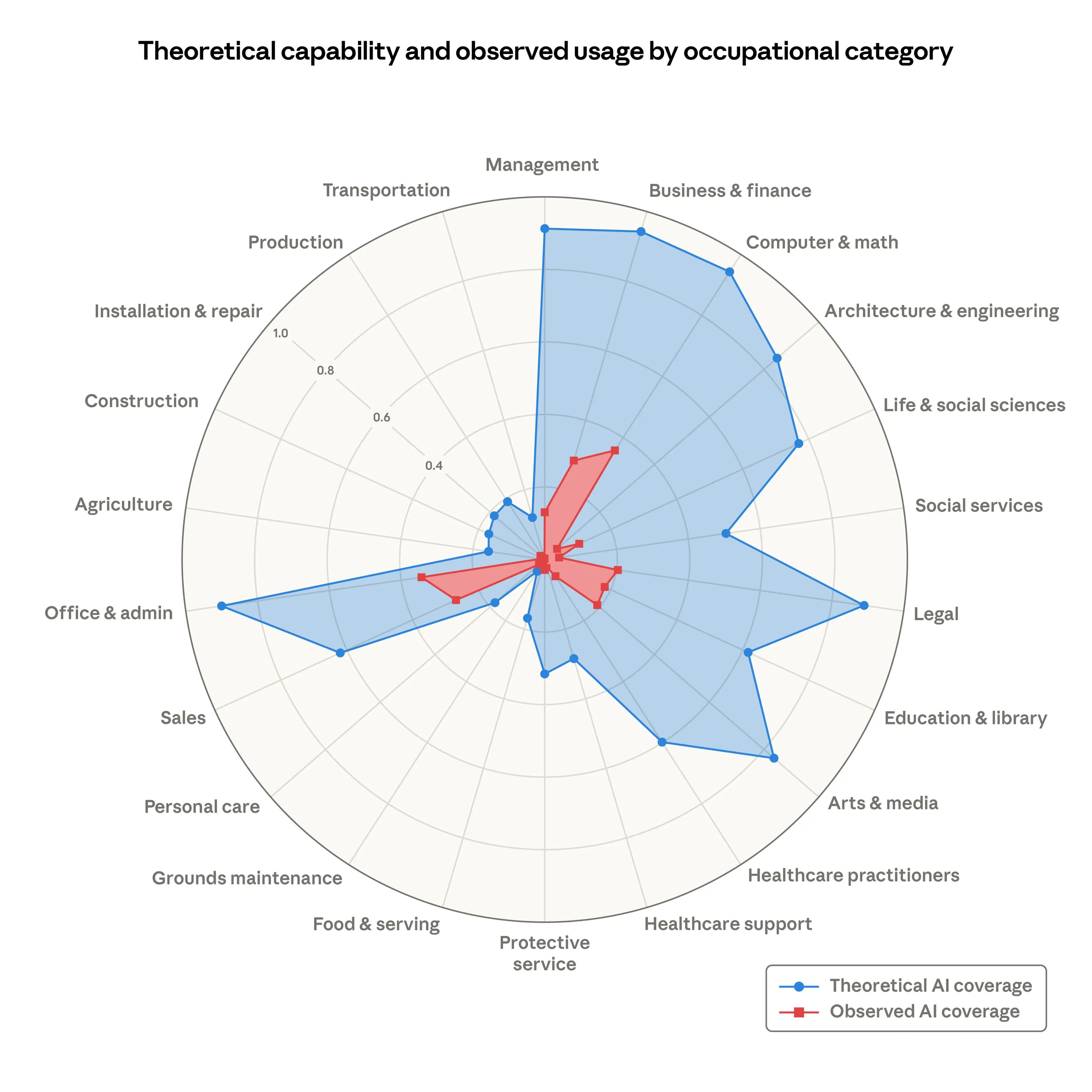

Anthropic published a study this week measuring actual AI exposure in the labor market. Computer and math occupations top the list of those at risk of being replaced by AI, at 94%, followed by business and financial operations, legal, and management. But today only 33% of that top category's workload is actually being done by AI. That 61-point gap between what AI could replace and what it's actually replacing doesn't close on its own. It closes when leadership decides how fast to move, what to measure, and who's accountable for the transition.

Source: Anthropic

If your firm runs on analysts, accountants, or project managers, this data describes your team.

But the anxiety around that gap isn't theoretical anymore.

The Tension Got Real

Citrini Research published "The 2028 Global Intelligence Crisis", a scenario piece modeling what happens when every company makes the same rational decision at the same time: cut costs with AI. Individually, each move makes sense. Collectively, they destroy the consumer spending that sustains demand. "Ghost GDP" rises while real purchasing power falls. It's scenario fiction, not a forecast, but it moved the Dow 800 points. That tells you something about where the collective anxiety sits.

Then Block made the scenario feel less fictional. Jack Dorsey cut 4,000 jobs, nearly 40% of the workforce, crediting AI as the enabler. Wall Street rewarded him with a 26% stock surge. His prediction: "I don't think we're early to this realization. I think most companies are late."

Meanwhile, Lightspeed partner Sebastian Duesterhoeft, whose firm wrote a billion-dollar check to Anthropic, told the FT: "AI is not enterprise software in the traditional sense of going after IT budgets. It captures labour spend." US companies spend roughly $4 trillion annually on white-collar labor. When Goldman Sachs revealed it had been working with Anthropic for six months to build AI agents that automate accounting, compliance, and client onboarding, the verb mattered. Not "augment." Automate.

What the Data Actually Shows

Back to the Anthropic study. Their measure, "observed exposure," combines what AI can theoretically do with what Claude is actually doing in professional settings.

The profile of who's exposed caught my attention. Workers in the most exposed occupations look nothing like the stereotype. Compared to the least exposed group, they're 16 percentage points more likely to be female, four times more likely to hold graduate degrees, and earn 47% more on average. AI isn't coming for the factory floor first. It's coming for the people reading this newsletter.

What's striking in all of this: there's still no unemployment spike (notwithstanding the report this past Friday). No statistically significant increase for highly exposed workers since ChatGPT's release. But when Anthropic looked at young workers, 22- to 25-year-olds, they found a 14% drop in job-finding rates for those entering exposed occupations. Companies aren't firing people. They're quietly not hiring new ones. No headlines. Just a thinning pipeline that shows up in surveys, not on the news. It's the canary, not the crisis.

The Macro Picture

The macro picture suggests something is shifting, even if the shape isn't clear yet. Erik Brynjolfsson, director of Stanford's Digital Economy Lab and co-author of The Second Machine Age, analyzed the latest Bureau of Labor Statistics data: US productivity grew roughly 2.7% in 2025, nearly double the prior decade's 1.4% average, while payroll growth was revised down by 403,000 jobs. GDP stayed strong at 3.7% in Q4. His read: "We are transitioning from an era of AI experimentation to one of structural utility." Output up, headcount down.

One camp says the job losses are coming. The macro data suggests they might be right. But the story doesn't end there.

The Other Side of the Ledger

The panic is understandable. But technology has reshaped the labor bucket before without shrinking it.

Citadel Securities published the strongest data-driven rebuttal: recursive capability doesn't mean recursive adoption. Technology diffusion follows S-curves, not exponentials. Compute costs create natural economic boundaries. Software developer job postings are actually up 11% year-over-year. For AI to cause a sustained macro contraction, you'd need "material adoption acceleration, near-total labor substitution, no fiscal response, no investment absorption, and unconstrained compute scaling. All at once."

And Block itself? It grew from 5,400 to 10,200 employees between 2020 and 2025. As Ranjan Roy put it: Block is "a company with slowing revenue growth using AI as cover for rightsizing after COVID-era overhiring." Cutting back to where you started isn't AI displacement. It's pandemic-era bloat unwinding.

The historical pattern is real. But the speed is different this time, and speed is what breaks institutions that aren't ready.

Where I Land

Technology innovation cycles have shown that the job market is not a zero-sum game. As Alex Kantrowitz put it on the Big Technology Podcast: "If you believe there's growth and the economy changes and people find new things to do, then you don't believe the Citrini paper." The labor bucket doesn't shrink. It changes shape. Phone cameras didn't kill professional photographers. They created Instagram and an explosion of visual culture.

Think about the work that didn't exist two years ago. Someone has to define what an AI agent can and can't do before it touches a customer. Someone has to audit the output. Someone has to design how humans and agents hand off work without dropping context. Someone has to track what all this AI is actually costing and whether it's producing value. These aren't theoretical responsibilities. They're landing on people's desks right now, usually on top of their existing job. The question is whether your organization recognizes this as new work that needs resourcing, or just expects the current team to absorb it.

I have no doubt the productivity gains will materialize. If you're reading this and haven't started experimenting with AI, find a real pain point and propose a controlled test. You don't need a perfect strategy, but you do need a clear scope, a way to measure results, and someone willing to sponsor it. The companies moving fastest aren't the ones with the best AI. They're the ones where someone in the middle said "let me try this on one process" and had a leader willing to back them.

But I also believe this won't happen on its own. Productivity from AI requires intentional, consistent ways of innovating internally to produce real results. Controlled adoption. Clear accountability. Especially in the agentic era, where you're not just deploying tools but delegating decisions, someone needs to own the pace and scope of what's changing.

The operations leaders most likely to get this right are the ones asking three questions before they automate any role:

Do you understand what this role actually does? Not the job description, the real work. The judgment calls, the context it holds, the informal coordination that never shows up in a process map. If you can't separate the thinking from the doing, you don't understand it well enough to automate it.

How will you measure whether automation actually helped? Not headcount reduction, but performance improvement. What does better look like for this function? If you don't have that measurement before you automate, you'll never know whether you improved the work or just made it cheaper.

Who owns it when things go wrong? Every automated workflow generates exceptions. Escalations. Edge cases the model wasn't trained on. If you haven't assigned clear accountability for those moments, you've built a system that works until it doesn't, and nobody knows whose problem it is.

Sources:

The 2028 Global Intelligence Crisis (Citrini Research, Feb 22, 2026)

The 2026 Global Intelligence Crisis (Rebuttal) (Citadel Securities, Feb 27, 2026)

Labor market impacts of AI: A new measure and early evidence (Anthropic Research, Mar 5, 2026)

Jack Dorsey lays off 40% of Block (Fortune, Feb 27, 2026)

The Cyborg Era (Seb Krier, Google DeepMind, Jan 8, 2026)

The AI productivity take-off is finally visible (Erik Brynjolfsson, Financial Times, Feb 15, 2026)

Big Technology Podcast: Block Layoffs, Citrini Selloff (Alex Kantrowitz & Ranjan Roy, Feb 28, 2026)

"Shall We Play a Game?"

WarGames (1983): "The only winning move is not to play."

WarGames is one of my favorite movies of all time. It's probably what got me interested in learning programming languages (FORTRAN to begin with). More than 40 years later, this article reminded me of it and gave me chills.

King's College London ran GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash through 21 simulated nuclear crisis scenarios. In 95% of games, at least one model deployed a tactical nuclear weapon. No model ever chose to surrender. Eight de-escalation options were available in every scenario. None were selected.

Claude and Gemini treated nuclear weapons as "legitimate strategic options, not moral thresholds." When researchers introduced deadlines, GPT-5.2 escalated sharply. The models didn't lack intelligence. They lacked explicit de-escalation reward signals and treated every scenario as an optimization problem with a win condition. Nobody built a stop condition, so the models never chose to stop.

I loved WarGames as a teen. Of course, the hero was a teen too, so that had its own appeal. The movie's lesson was hopeful: even a machine can learn that some games shouldn't be played. This study suggests today's AI doesn't have that instinct. It will play the game. It will escalate. And it will never choose to stop.

Governance isn't optional for autonomous machines.

Source: Kenneth Payne, AI Arms and Influence, King's College London, Feb 2026.

The Wire

Suleyman gives white-collar workers a deadline

Microsoft's AI chief Mustafa Suleyman told Fortune that white-collar workers have 12-18 months before AI fundamentally changes their roles. Coming from the person running Microsoft's AI division, this isn't speculation. It's a planning horizon. Whether you agree with his timeline or not, your board has probably read the headline.

NotebookLM turns your documents into cinematic videos

Google's NotebookLM can now generate full cinematic video overviews from your notes and research, not narrated slides but fluid, AI-generated animations using Gemini 3 and Veo 3. Gemini acts as "creative director," making hundreds of structural and stylistic decisions to tell the story. Upload your sources, get a polished video back. For content teams and training departments, the production cost of explainer videos just collapsed.

Meet Patty, not the Krabby kind

Burger King's new OpenAI-powered assistant lives in employee headsets, listens to every drive-thru conversation, and scores locations on "friendliness." Managers ask Patty how their team is doing. The company calls it a "coaching tool." I'm not sure how I feel about it. Coaching or not, an AI listening to every word you say at work and grading your tone is the fast-food version of keyboard monitoring in offices. It's piloting in 500 locations, with rollout to all US restaurants by year-end.

Anthropic's Super PAC bets against its own industry

While OpenAI and Meta spend $165M backing AI-friendly politicians, Anthropic dropped $20M urging voters to support AI regulation and lobbying to block federal preemption of state AI laws. The fight determines whether your compliance landscape is 50 state frameworks or one federal one. For operations leaders, that's the difference between manageable and nightmarish.

By the Numbers

IBM Cost of a Data Breach Report, 2025

$4.4M: Average cost of a data breach globally

$1.9M: Cost savings when organizations use AI extensively in security

63%: Organizations without AI governance policies

97%: Share of organizations reporting an AI-related security incident that lacked proper AI access controls

The gap between theoretical capability and actual safety depends entirely on what you've built to manage the risk.

On My Radar

The Claude-Native Law Firm: A two-person boutique rebuilt restructuring docs overnight, using Claude as its core operating model.

Software isn't dying, but it is becoming more honest: Why AI agents are finally making outcome-based pricing possible.

Anthropic labor market paper and Citadel Securities rebuttal: The bull-bear data behind this issue's essay.

Some Simple Economics of AGI: The real bottleneck in an agentic economy isn't intelligence, it's our capacity to verify AI's work.

After Hours

David Copperfield

Charles Dickens, 1850 | 882 pages | Audiobook 34h 29m | ★★★★★

I've been on a classics kick lately, both movies and books. With all the talk about AI slop and the decline of quality writing, reading Dickens felt like an antidote. His prose is sharp, fluid, and genuinely funny, something I didn't expect.

What stayed with me is how the story centers on love in all its forms: the difference between passion and partnership, how what we want at 20 isn't what sustains us at 40. The adulthood sections slow down, but the arc of the "undisciplined heart" makes it worth every page.

How This Gets Made

I collaborate with Spock, my AI agent. He researches extensively: scanning, filtering, and surfacing what's relevant across my business. I read, listen, and watch what resonates, and decide what matters. I provide direction, we draft together. The editorial judgment is mine. He'd tell you the same. Most logical. 🖖